History loves a breakthrough, but it rarely sticks around for the follow-up interview. Again and again, inventors and tech leaders built tools that changed daily life, only to watch those tools drift into consequences they never fully intended.

From late-19th-century laboratories to Silicon Valley growth teams, this list tracks the uneasy moment when innovation met reality. Keep reading and you will see how ambition, speed, and human behavior turned clever ideas into cautionary tales their creators could not ignore.

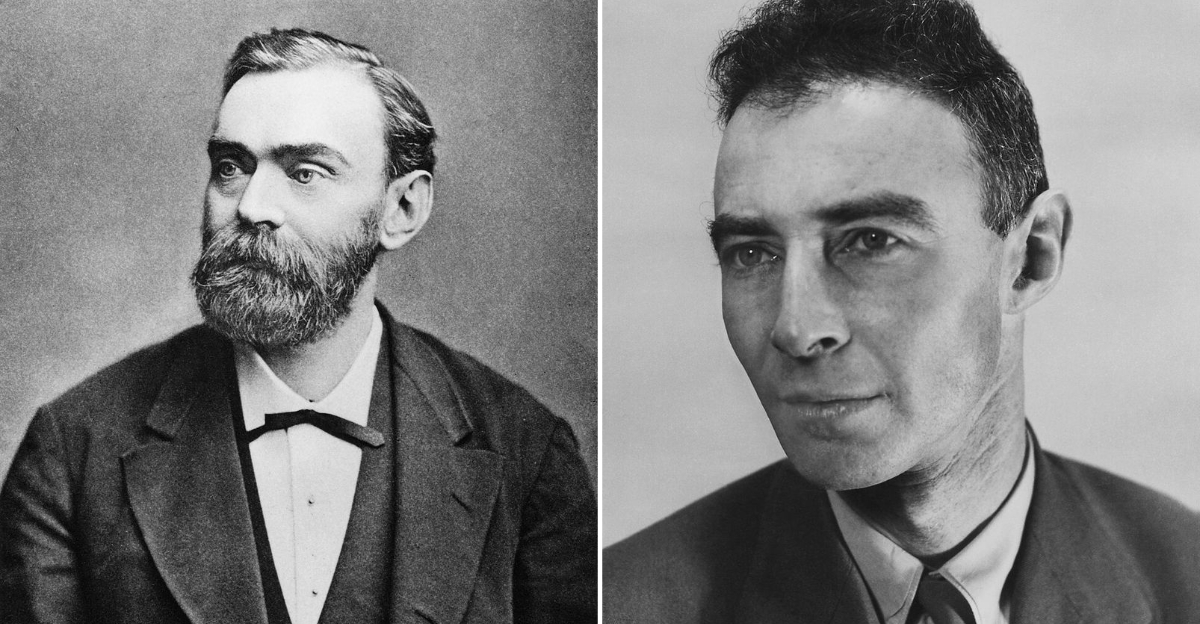

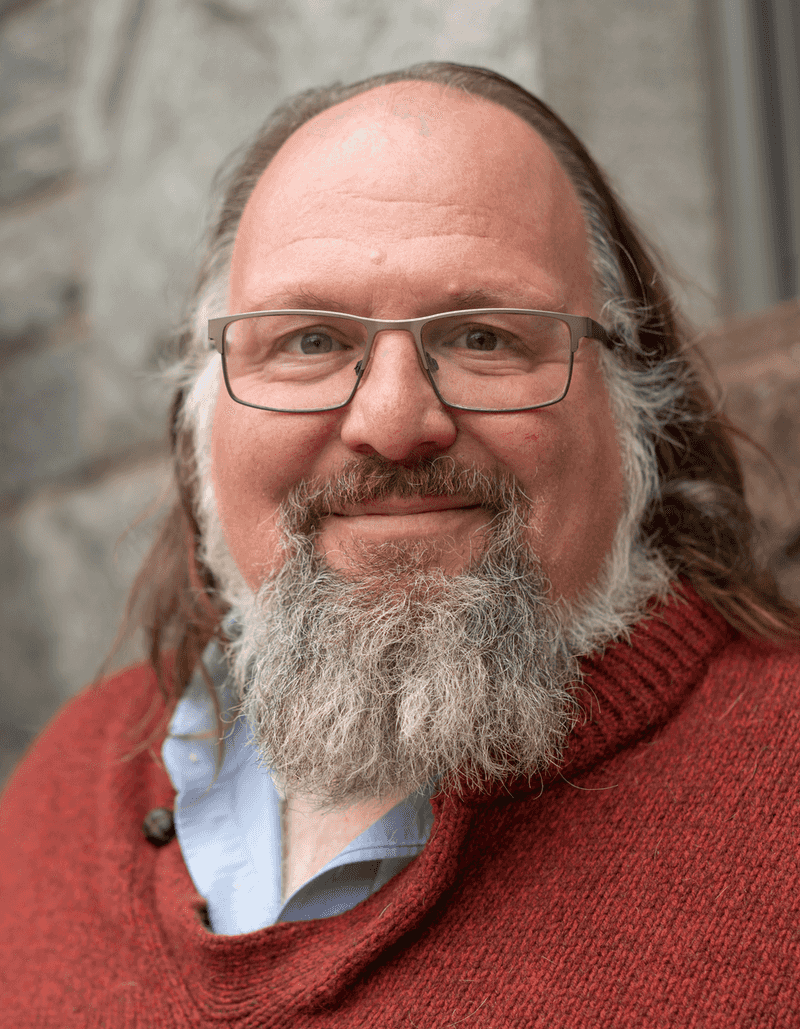

1. Tim Berners-Lee – World Wide Web

The web began as an open information system, not a blueprint for chaos, manipulation, or giant walled platforms. Tim Berners-Lee proposed the World Wide Web in 1989 to help researchers share information more easily, and the idea quickly reshaped education, media, commerce, and everyday communication.

For years, Berners-Lee remained optimistic about the web’s democratic potential. Over time, though, he repeatedly warned that misinformation, invasive data practices, and platform concentration were distorting the original vision.

The open network he imagined had become increasingly mediated by a handful of powerful companies.

His concern is best understood as disappointment rather than full rejection. He still argues that the web can be improved through stronger standards, better governance, and technologies that return more control to users.

That makes his case especially interesting. Instead of walking away from his creation, he has spent years trying to rescue it from its own success.

When one of the internet’s founding figures says the system needs repair, it lands differently. Berners-Lee’s warnings remind you that open tools can still drift toward centralization if convenience keeps beating accountability.

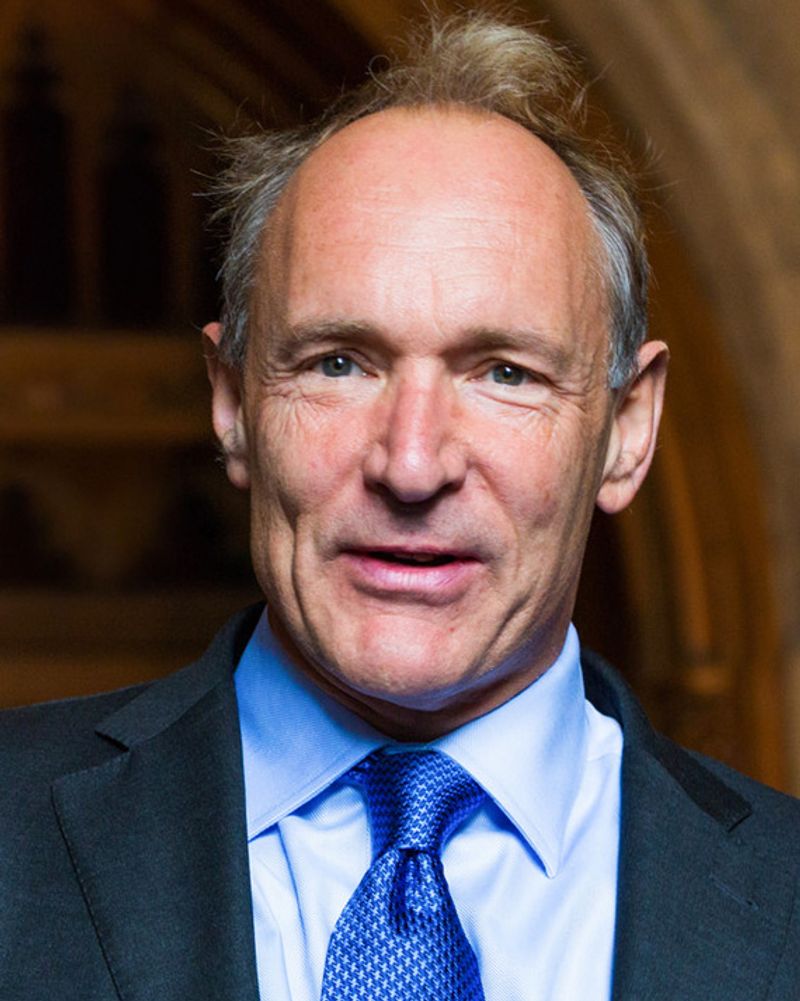

2. J. Robert Oppenheimer – Atomic Bomb

Few scientific achievements arrived with a heavier afterthought than this one. J. Robert Oppenheimer helped lead the Manhattan Project during World War II, turning advanced physics into a weapon that permanently changed military strategy and global diplomacy.

At the time, the project was driven by urgency, secrecy, and fear that another power might get there first. After the war, Oppenheimer became increasingly troubled by what nuclear weapons meant for humanity, public policy, and the future of scientific responsibility.

He did not reject science itself, but he questioned the path from discovery to deployment when consequences moved faster than ethics. His later opposition to expanded nuclear arms development complicated his public standing, yet it also made him one of history’s clearest examples of a brilliant mind reassessing its own work.

When you look back at twentieth-century innovation, his story stands as a reminder that technical success and moral comfort do not always arrive together.

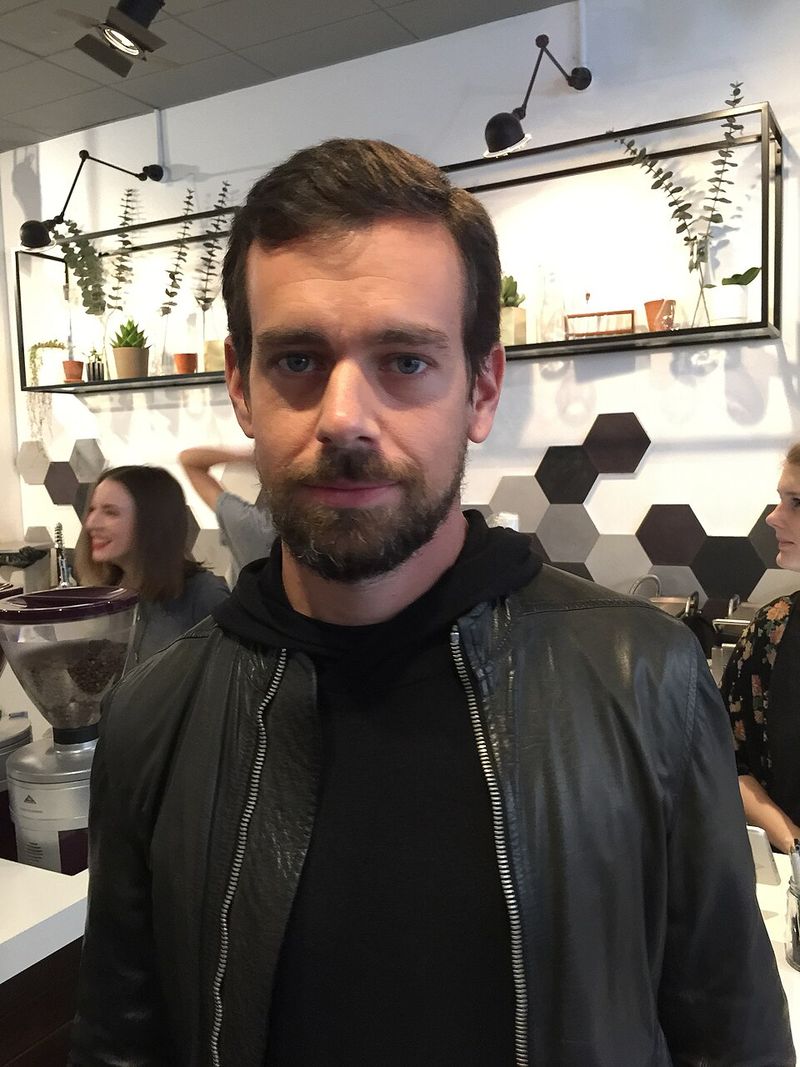

3. Jack Dorsey – Twitter

A platform built for quick updates turned into a global pressure cooker with very little space for nuance. Jack Dorsey co-founded Twitter, a service that transformed journalism, politics, celebrity culture, sports chatter, and public commentary by making short posts central to real-time conversation.

That speed became the feature and the problem. Twitter could spread eyewitness reports and breaking news quickly, but it could also reward pile-ons, harassment, distorted context, and impulsive reactions.

Dorsey later acknowledged ongoing concerns about platform control, moderation, and the way online discourse unfolded at scale.

His relationship with Twitter often seemed caught between idealism and frustration. He promoted decentralization ideas and showed interest in systems that might reduce dependence on one company’s rules, a notable stance from someone who helped build one of the most influential centralized conversation platforms on earth.

Dorsey’s later comments did not amount to a simple apology, but they clearly reflected unease with what the service had become. For many users, Twitter felt like a public square designed by people who underestimated how quickly incentives, algorithms, and social pressure could turn conversation into performance.

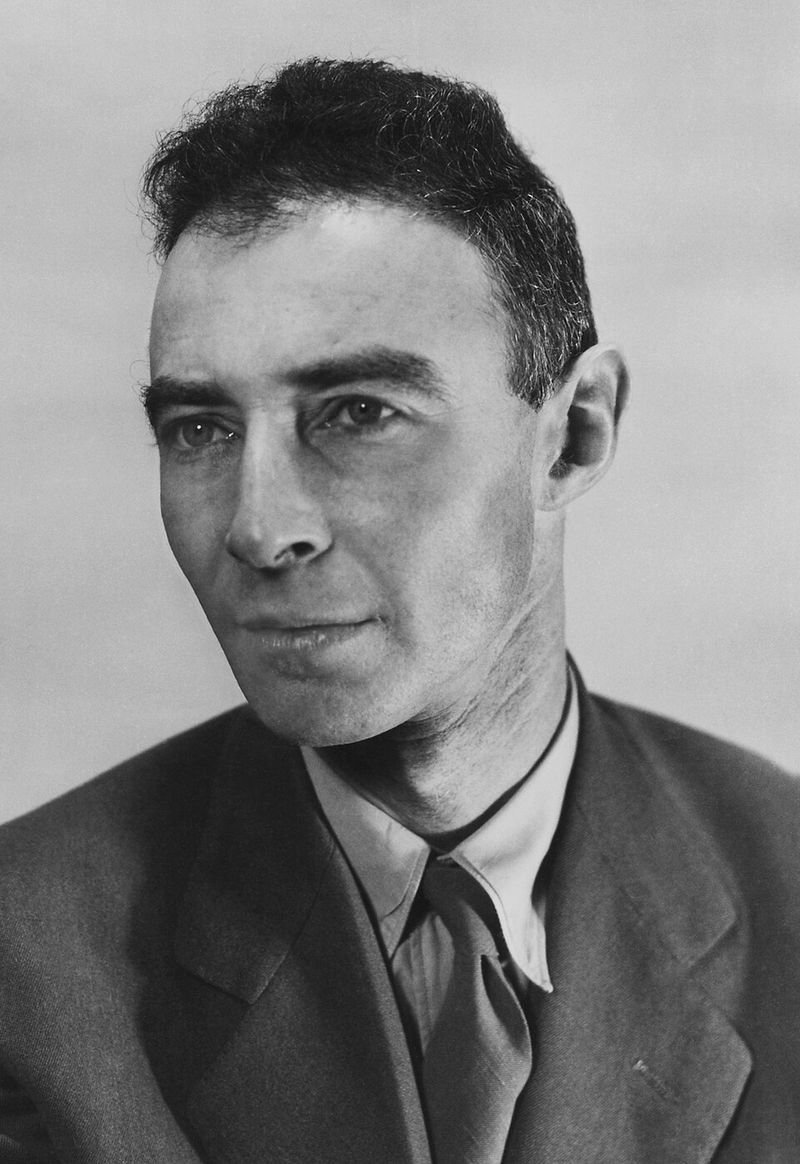

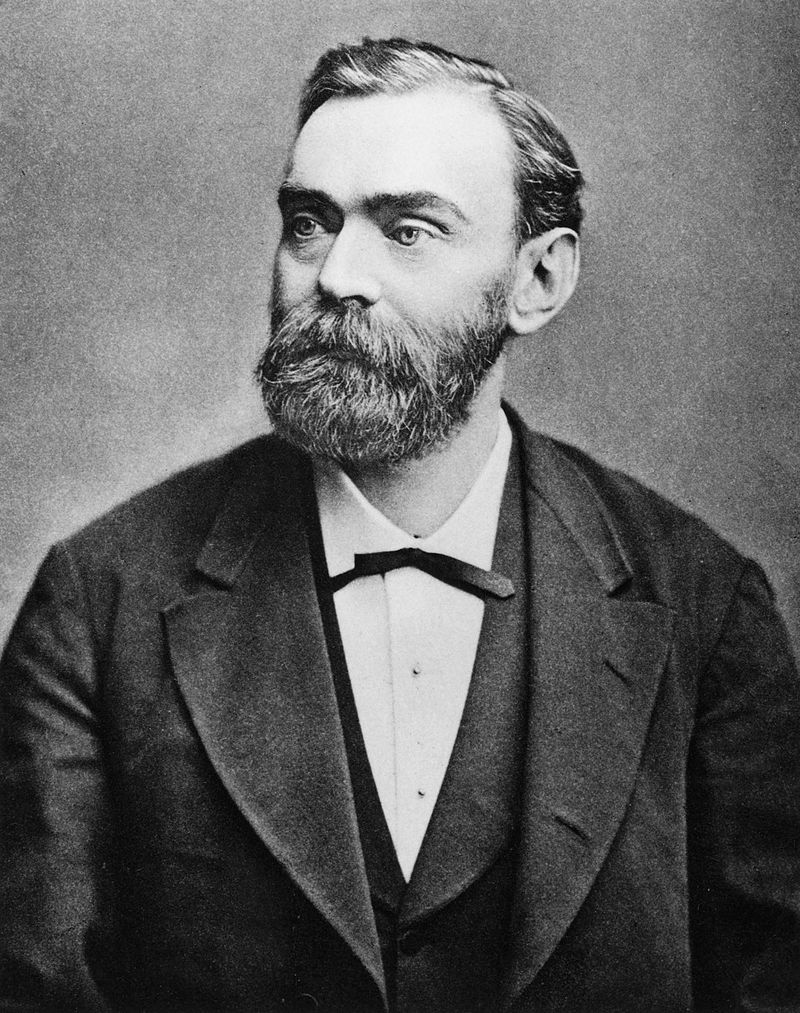

4. Alfred Nobel – Dynamite

The man behind one of industry’s most useful inventions ended up worrying about its reputation. Alfred Nobel invented dynamite in 1867, creating a safer and more stable explosive for mining, construction, and large engineering projects that previously relied on less predictable materials.

The invention was commercially successful and technically important, helping tunnels, roads, and rail lines move forward faster. Yet Nobel also watched dynamite become associated with warfare and destructive uses far beyond the practical industrial role he had imagined.

That tension appears to have shaped how he wanted his legacy understood. After reading a premature obituary that framed him harshly, Nobel directed much of his fortune toward prizes honoring achievements in science, literature, and peace.

Whether the obituary alone changed his thinking or merely confirmed concerns he already had, the result was remarkable: an inventor tried to rebalance his public image by rewarding human progress.

His story still feels current because it captures a familiar problem: once a technology leaves the lab, its inventor no longer controls the script.

5. Sean Parker – Facebook Growth Systems

Silicon Valley rarely hands out medals for saying the quiet part out loud, but Sean Parker did. An early Facebook president, Parker later described how social platforms were designed to keep users engaged through small hits of social validation and constant return visits.

That admission mattered because it pulled the curtain back on a business model many people felt but could not quite name. Features that looked harmless, like likes, comments, and notifications, were not random conveniences. They were deliberate loops built to capture attention and extend time on the platform.

Parker’s later comments suggested discomfort with how effectively those systems shaped behavior, especially for younger users. He was not alone in the industry, but he became one of the most widely quoted insiders willing to explain the logic plainly.

That honesty turned his story into more than a footnote in Facebook history. It became a case study in how product design, advertising incentives, and human psychology can reinforce one another until a social network stops feeling like a tool and starts behaving like a habit.

6. Chamath Palihapitiya – Facebook Expansion

There is something striking about a former growth executive openly criticizing the machine he helped scale. Chamath Palihapitiya played a key role in Facebook’s expansion and later spoke bluntly about the broader social damage he believed certain online engagement patterns could create.

His remarks stood out because they came from someone who understood platform mechanics from the inside. He was not discussing abstract fears. He was describing how rapid user growth, feedback loops, and algorithmic amplification could reward outrage, weaken trust, and distort public conversation.

Palihapitiya did not claim that social media was inherently bad in every form, but he questioned the incentives behind major platforms and the behavioral outcomes they produced. That distinction is important.

Regret in tech often sounds less like a total rejection and more like a sober update after years of real-world evidence. In his case, the evidence included polarization, compulsive use, and the flattening of complex discussion into shareable fragments.

His later criticism became part of a wider reckoning over whether growth at any cost was ever a sensible philosophy for tools used by billions.

7. Tristan Harris – Persuasive Tech Design

Sometimes the most revealing critics are the people who once helped tune the system from the inside. Tristan Harris worked in design roles at Google and later became one of the leading public voices warning about persuasive technology and the competition for human attention.

His critique focused less on a single app and more on an entire design philosophy. Many digital products, he argued, were built to exploit predictable psychological triggers, nudging users toward endless scrolling, repeated checking, and behavior that benefited platforms more than the people using them.

What made Harris influential was his ability to translate design language into everyday terms. He explained how convenience could quietly become compulsion when incentives rewarded time spent rather than user wellbeing.

That message found an audience as screen time climbed and concerns about digital fatigue moved from niche debate to common conversation. Harris did not invent persuasive design alone, of course, but he became closely associated with exposing its logic and challenging the industry to rethink it.

His later advocacy showed that technical skill can evolve into ethical criticism when creators decide the interface is telling a more troubling story than the marketing ever did.

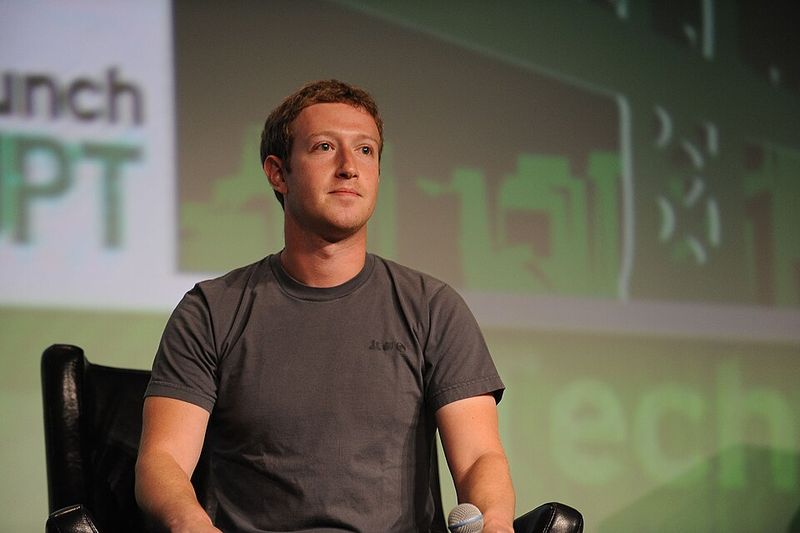

8. Mark Zuckerberg – Facebook

What starts as a campus networking tool can become a policy headache for half the planet remarkably fast. Mark Zuckerberg co-founded Facebook in 2004, and within a few years it had changed how people shared photos, followed events, consumed news, and maintained social ties.

As the platform expanded, criticism followed. Privacy issues, misinformation, content moderation disputes, and concerns about algorithmic influence pushed Facebook far beyond the casual college origins people still associate with its early years.

Zuckerberg’s public stance gradually shifted from pure growth language toward repeated promises to fix what had gone wrong.

That does not amount to a sweeping confession, but it does show recognition that the platform’s impact was far messier than its founding story suggested. The challenge for Zuckerberg has been that Facebook’s biggest strengths (scale, personalization, and frictionless sharing) are closely tied to the problems critics identify.

Trying to correct one without weakening the others is a difficult engineering and governance puzzle. His later efforts to address privacy and harmful content reflect a familiar pattern in tech history: creators often discover that once a product becomes infrastructure, every design choice starts carrying social consequences the original prototype never had to answer for.

9. Vitalik Buterin – Ethereum

Crypto’s most thoughtful critic sometimes sounds like one of its main architects, because he is. Vitalik Buterin co-founded Ethereum, which launched in 2015 as a programmable blockchain intended to support smart contracts and decentralized applications far beyond simple digital currency transfers.

Ethereum opened the door to new experiments in finance, governance, digital ownership, and online coordination. It also became a magnet for speculation, hype cycles, dubious projects, and scams that often overwhelmed serious technical work.

Buterin has repeatedly criticized those excesses, even while continuing to develop the ecosystem.

That tension defines his place in tech history. He has not disowned Ethereum, but he has pushed back against the loudest parts of crypto culture, especially when short-term profits eclipse useful applications.

His comments on fees, sustainability, governance, and reckless speculation suggest an inventor trying to keep a platform from being reduced to its most chaotic use cases. In a field that often rewards certainty and slogans, Buterin’s willingness to admit tradeoffs feels unusual.

It shows that regret is not always about abandoning a creation. Sometimes it looks like staying involved while publicly arguing that too many people are using the tool for the least interesting reasons.

10. Geoffrey Hinton – Artificial Intelligence

The person nicknamed a godfather of AI became one of its most unsettling public skeptics. Geoffrey Hinton’s work on neural networks was foundational to modern artificial intelligence, especially the deep learning systems that now power image recognition, language tools, and many commercial applications.

For years, his research sat in the background while computing power and data slowly caught up. Once AI capabilities accelerated, Hinton began speaking more openly about the risks, including misinformation, job disruption, and the possibility that highly capable systems could outpace society’s ability to manage them responsibly.

His concerns carried unusual weight because they came from someone whose work helped make the current moment possible. Hinton did not suddenly decide machine learning was useless.

He worried that progress was moving so quickly that safeguards, institutions, and public understanding might lag behind. That difference matters.

His message was not anti-science. It was a warning about speed, incentives, and overconfidence.

In tech history, that is a recurring pattern: a field spends decades trying to make something work, then has very little time to decide how the thing should fit into everyday life once it finally does.

11. Ethan Zuckerman – Pop-Up Ads

The internet found many ways to test human patience, and pop-up ads quickly reached the finals. Ethan Zuckerman wrote the code for one of the earliest pop-up ad formats in the late 1990s while working at Tripod, trying to solve what was then a practical problem in web publishing.

Advertisers wanted visibility without appearing directly beside page content they might consider inappropriate for their brands. The pop-up offered separation, but it also created a browsing experience people would spend years trying to block, close, dodge, and complain about with impressive consistency.

Zuckerman later apologized for the invention, making him one of the clearest examples of a creator publicly acknowledging that a useful business fix had turned into a cultural nuisance. The story is almost funny until you remember how deeply pop-ups shaped online habits.

They influenced browser tools, ad-blocking software, page design conventions, and the general level of suspicion users developed toward anything flashing in a corner. His regret captures a larger truth about internet history: small design decisions made under short-term commercial pressure can linger for decades, long after the original rationale has stopped sounding reasonable.

12. Robert Propst – Office Cubicles

The cubicle began as a flexible office idea, not a punchline about fluorescent paperwork and captive staplers. Robert Propst designed the Action Office system for Herman Miller in the 1960s, aiming to create a more adaptable, efficient workspace that supported different tasks and improved employee movement.

His concept was more sophisticated than the cubicle farms that later filled corporate buildings. Action Office used modular components, varying heights, and open possibilities for customization.

Businesses, however, often reduced the idea to dense, standardized partitions that maximized floor space and minimized individuality.

Propst later criticized what the office had become, calling out the way companies turned a thoughtful design system into rigid rows of semi-private boxes. That distinction matters because the modern cubicle was not simply his vision carried out faithfully.

It was his idea simplified by cost cutting and managerial convenience. Still, his name remains attached to one of the most mocked workplace forms of the late twentieth century.

If you have ever wondered how a design meant to improve office life became shorthand for corporate monotony, Propst’s story explains it neatly: invention is one thing, implementation is another, and accounting departments usually get the last word.