Queen Elizabeth II was born on April 21, 1926, and lived to see 96 remarkable years of history. During her lifetime, the world transformed in ways that would have seemed like pure science fiction to her parents.

From the microwave oven to the World Wide Web, some of the most iconic inventions ever created came after she was already walking, talking, and wearing tiny royal shoes. Here are 15 world-changing inventions that Queen Elizabeth II was actually older than.

The Microwave Oven (1945)

Percy Spencer did not set out to reinvent cooking. He was working on radar equipment in 1945 when he noticed a chocolate bar in his pocket had melted near a magnetron.

That happy accident led to a patent and, in 1947, to the first commercial microwave oven, which was taller than most adults and cost around $5,000.

The future Queen Elizabeth II, then a 19-year-old princess, had joined the Auxiliary Territorial Service earlier that year when Spencer filed his patent. The early microwave was nicknamed the Radarange and was nothing like the countertop box we nuke leftovers in today.

It weighed 750 pounds. Seven hundred and fifty pounds.

By the 1970s, smaller home models finally arrived, and reheating coffee became a universal human experience. I genuinely cannot function before my morning cup, so I owe Percy Spencer a personal debt of gratitude.

The microwave quietly became one of the most used appliances on Earth.

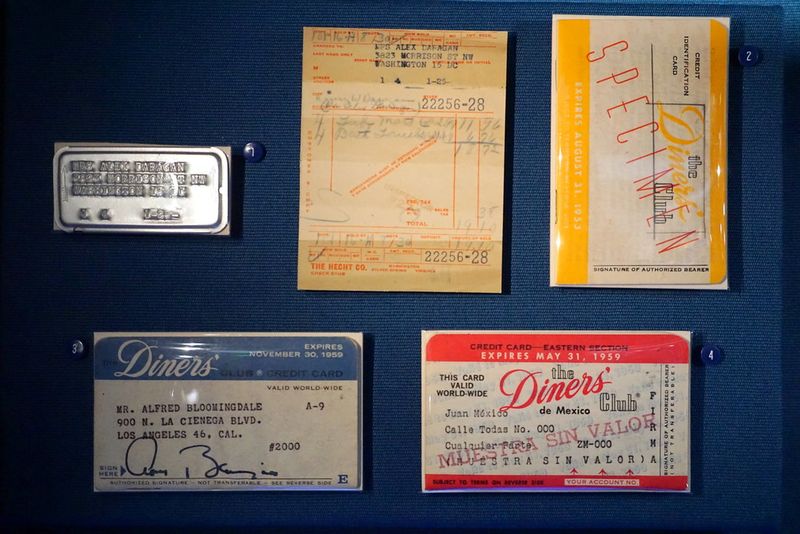

The Diners Club Charge Card (1950)

Frank McNamara forgot his wallet at a fancy New York restaurant in 1949 and felt deeply embarrassed. That awkward dinner led him to launch the Diners Club card in 1950, the first widely used multipurpose charge card accepted at multiple businesses.

No more wallet panic at the checkout.

The future Queen was 23, about to turn 24, when this little cardboard rectangle changed the financial world forever. The original card was made of paper and had just 27 restaurants on its acceptance list.

About 200 people held the card at launch, which is modest by today’s standards but revolutionary for the era.

Calling it a credit card is technically a stretch since the full balance had to be paid monthly. It was a charge card, pure and simple.

Still, it planted the seed for the entire plastic money industry. Today there are over 2.8 billion credit cards in circulation worldwide.

McNamara’s embarrassing dinner paid off enormously.

Modern Color Television Standards (1953)

Color television did not just magically appear one day in full technicolor glory. The real turning point came in 1953 when the NTSC color standard was approved in the United States, making color broadcasts compatible with existing black-and-white sets.

That compatibility detail was the game-changer nobody talks about enough.

Queen Elizabeth II was 27 years old that year, and interestingly, her own coronation in June 1953 was one of the first major events broadcast on British television. Millions watched in black and white, but color was already knocking at the door.

The timing feels almost poetic.

Early color sets were wildly expensive, so most families did not own one until the 1960s. NBC pushed hard for color programming to sell more sets, since RCA, its parent company, manufactured them.

It was capitalism and technology holding hands and skipping together. Color TV eventually became the universal standard and transformed entertainment permanently.

The First Practical Silicon Solar Cell (1954)

Bell Labs scientists Daryl Chapin, Calvin Fuller, and Gerald Pearson unveiled something extraordinary in April 1954: a silicon solar cell that could actually convert sunlight into usable electricity efficiently. Before this, solar cells existed but were so inefficient they were basically decorative.

This one worked at about 6% efficiency, which sounds modest but was a genuine breakthrough.

The Queen was 28 that year and had been on the throne for just two years. Meanwhile, three scientists in New Jersey were quietly laying the foundation for the entire solar energy industry.

The New York Times called it the beginning of a new era, which turned out to be completely accurate for once.

Today, solar panels power homes, satellites, and entire cities. The global solar market is worth hundreds of billions of dollars annually.

From a lab experiment in 1954 to rooftop panels everywhere, the journey took decades but arrived in spectacular fashion. Clean energy owes everything to that sunny afternoon at Bell Labs.

The Hovercraft (1955-1956)

Christopher Cockerell tested his hovercraft concept using a cat food tin, a coffee can, and an industrial fan. That gloriously low-budget experiment in 1955 proved that trapping a cushion of air under a vehicle could lift it off surfaces entirely.

His patent followed in 1956, and the modern hovercraft was born.

Queen Elizabeth II was 29 or 30 during those key development years, already deep into her reign. The first full-scale hovercraft, the experimental SR.N1, crossed the English Channel in 1959 and caused a sensation.

People genuinely could not believe a vehicle was gliding across water without touching it.

Hovercraft went on to serve as military vehicles, passenger ferries, and rescue craft. The cross-channel hovercraft service ran commercially until 2000.

There is something wonderfully British about the fact that one of the most futuristic vehicles ever invented was developed using kitchen tins. Cockerell fully earned his knighthood, which came in 1969.

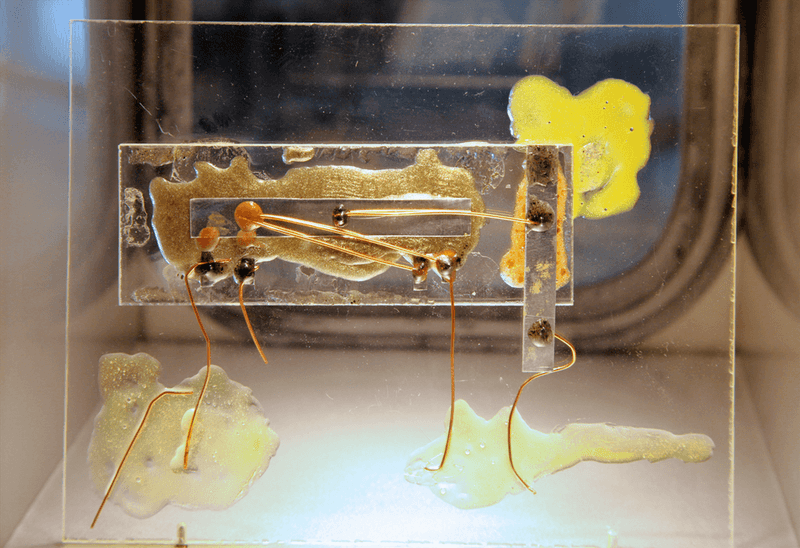

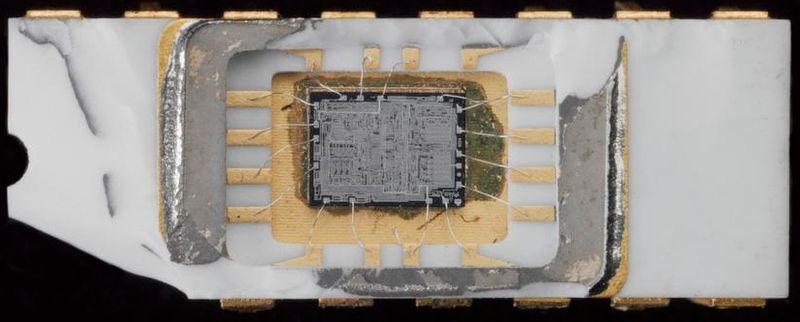

The Integrated Circuit (1958)

Jack Kilby had only been at Texas Instruments for a few months when he had one of the most important ideas in the history of technology. In the summer of 1958, while most colleagues were on vacation, he sketched out and then built the first integrated circuit on a tiny piece of germanium.

He shared the Nobel Prize in Physics in 2000 for that summer of thinking.

Queen Elizabeth II was 32 at the time, and the world had no idea what was coming. The integrated circuit meant that transistors, resistors, and capacitors could all live on one small chip instead of being wired together separately.

Electronics shrank almost overnight as a result.

Without the integrated circuit, there are no personal computers, no smartphones, no modern medicine, and honestly no internet. Every digital device you own traces its lineage directly back to Kilby’s quiet summer project.

One chip to rule them all, basically. Technology has never been the same since that humid Texas afternoon.

The Laser (1960)

Theodore Maiman built the first working laser on May 16, 1960, using a synthetic ruby rod and a photographer’s flash lamp. His colleagues at Hughes Research Laboratories called it a solution looking for a problem.

They were spectacularly wrong. The laser turned out to be one of the most versatile inventions in human history.

Queen Elizabeth II was 34 that year, and the word laser had only just been coined as an acronym: Light Amplification by Stimulated Emission of Radiation. Try fitting that on a birthday card.

Maiman’s device produced a deep red beam that became the prototype for everything that followed.

Today lasers perform eye surgery, read barcodes, cut steel, transmit data through fiber-optic cables, and power Blu-ray players. They are in dentist offices, factories, concert stages, and military systems.

I had laser eye surgery a few years ago and it took about 90 seconds per eye. The fact that this all started with a ruby and a camera flash still blows my mind.

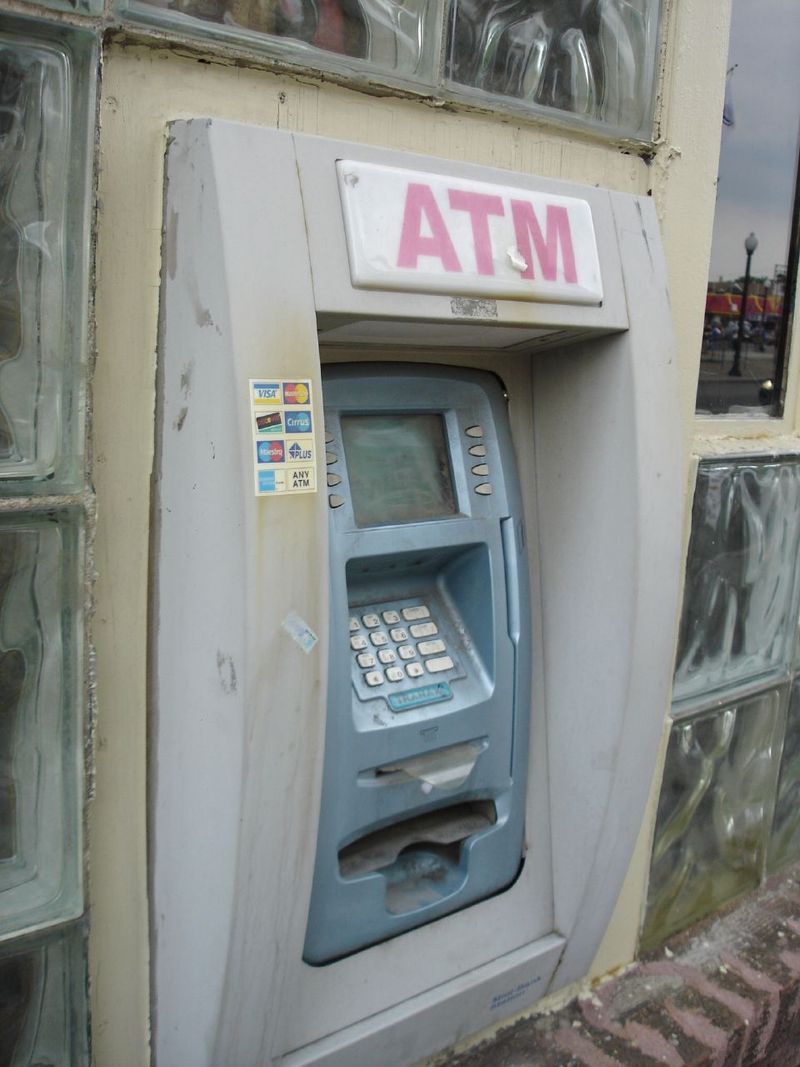

The First ATM / Cash Machine (1967)

On June 27, 1967, Barclays Bank installed the world’s first cash machine at its branch in Enfield, north London. The first person to use it was British comedy actor Reg Varney, which is a fact so wonderfully random that no screenwriter could have invented it.

The machine accepted special paper vouchers rather than reading a plastic card, and paid out cash in exchange.

Queen Elizabeth II was 41 that year, and banking was about to change forever. Before ATMs, getting cash meant standing in a queue during banker’s hours, which were notoriously short and inconvenient.

The cash machine meant money was suddenly available at any hour, any day. Revolutionary does not even cover it.

There are now roughly 3 million ATMs operating worldwide. The four-digit PIN owes its length to John Shepherd-Barron, the man behind the Barclays machine, who originally wanted a six-digit code but settled for four after his wife said six was too many to remember.

Four digits have protected bank accounts ever since. We have one woman’s memory to thank for that particular security standard.

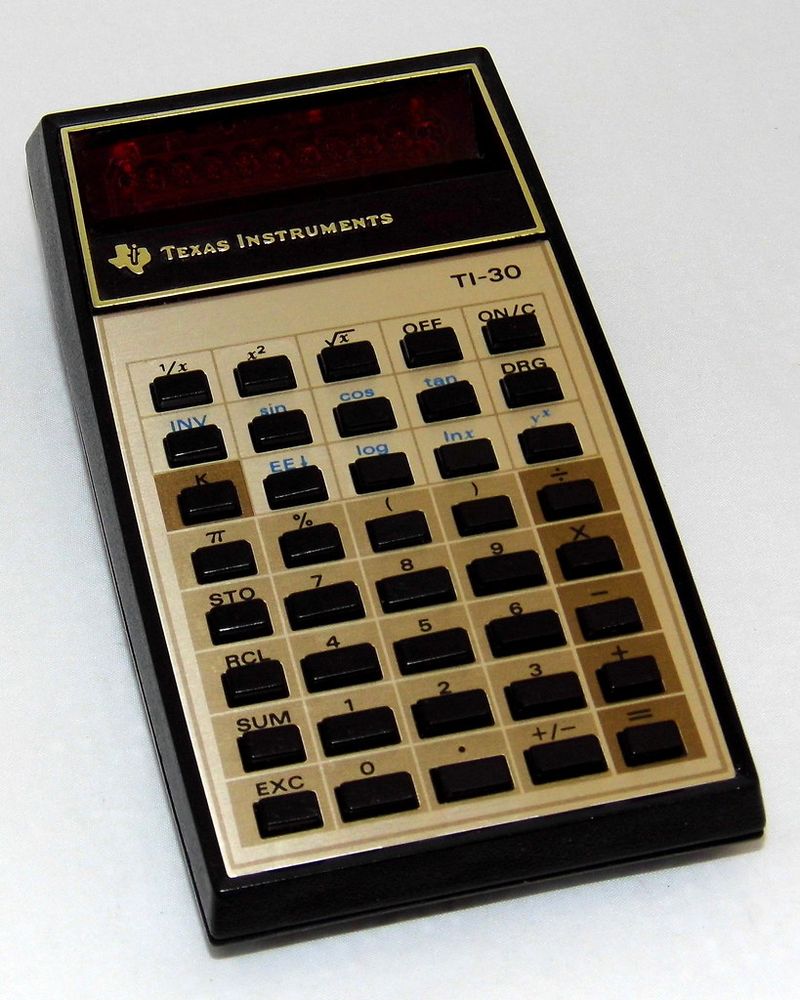

The First Handheld Electronic Calculator (1967)

Texas Instruments engineer Jack Kilby, the same brilliant mind behind the integrated circuit, was also part of the team that built the first handheld electronic calculator prototype in 1967. It was called the Cal Tech and could add, subtract, multiply, and divide.

It printed results on a paper tape, which feels adorably retro now.

Queen Elizabeth II was 41 that year, and math teachers everywhere had no idea their authority was about to be challenged. Before handheld calculators, people used slide rules, log tables, and a lot of mental effort to crunch numbers.

The Cal Tech prototype changed all of that in one compact device.

Commercial pocket calculators arrived in the early 1970s and caused genuine panic in schools about whether students would forget how to do arithmetic. Spoiler: some of us did.

By the mid-1970s, calculators were affordable enough for students to own. They became standard classroom tools and eventually got absorbed into every smartphone ever made.

Math was never quite the same again.

ARPANET, the Forerunner of the Internet (1969)

The first message ever sent over ARPANET on October 29, 1969, was supposed to be the word LOGIN. The system crashed after just two letters, so the actual first internet message in history was LO.

Accidentally poetic, honestly. That stumbling start launched the network that eventually became the internet.

Queen Elizabeth II was 43 at the time, and the U.S. Department of Defense was funding a project that would reshape civilization.

ARPANET connected just four computers at first, at UCLA, Stanford Research Institute, UC Santa Barbara, and the University of Utah. Four nodes.

That is all it took to start the revolution.

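A popular story says the network was designed to survive a nuclear attack. In fact, ARPANET was built so researchers could share scarce computers, though its packet-switched design drew on earlier work on attack-resistant communications and could reroute data automatically. That decentralized structure is why the internet today has no single off switch.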

By 1972, ARPANET had 37 nodes and was already being used for email. From a two-letter crash to a global network used by 5 billion people, the glow-up was historic.

The Microprocessor (1971)

The Intel 4004 was roughly the size of a fingernail and contained 2,300 transistors. When it launched in November 1971, it offered about the same computing power as the ENIAC, a room-filling computer completed in 1945 that weighed 27 tons.

Fitting that into a fingernail-sized chip was one of the most jaw-dropping engineering achievements ever pulled off.

Queen Elizabeth II was 45 years old when Intel quietly changed the world with this tiny rectangle. The 4004 was originally designed for a Japanese calculator company called Busicom, but Intel engineer Ted Hoff realized its potential went far beyond calculators.

He was correct in the most spectacular way possible.

The microprocessor made it possible to put computing power into almost anything: cars, appliances, medical devices, and eventually phones. Every digital device manufactured since the early 1970s owes its existence to that little chip.

The microprocessor did not just change technology. It changed what technology could be.

That is a very different and much bigger thing.

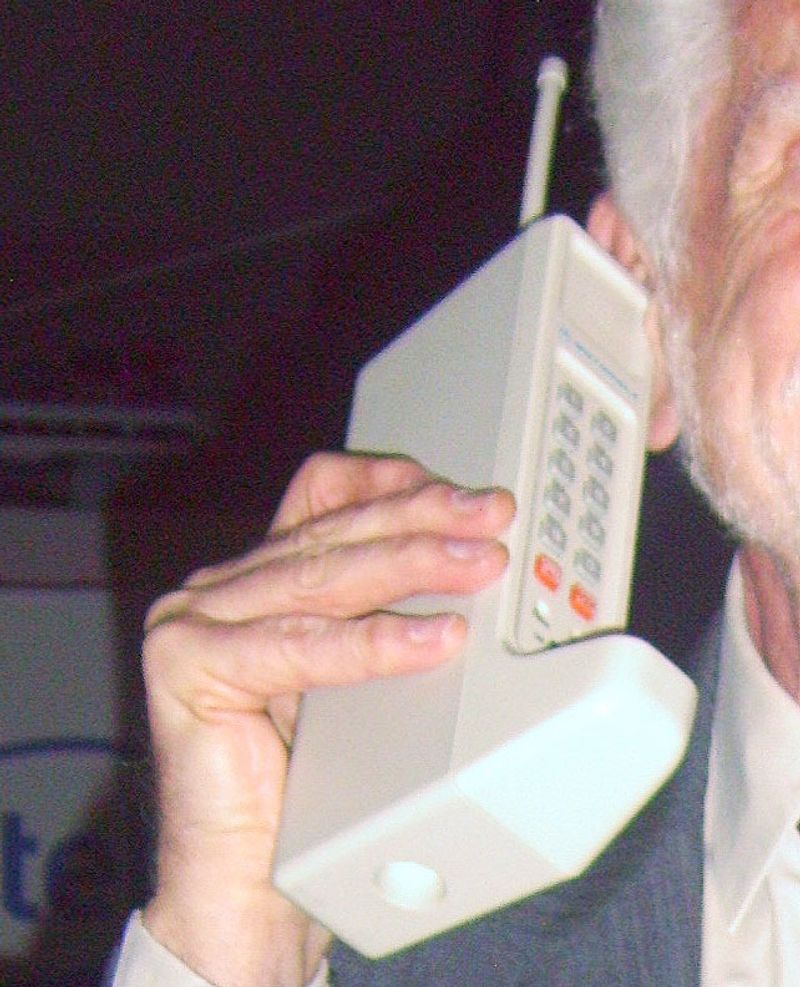

The Handheld Mobile Phone Call (1973)

Martin Cooper stepped onto a New York City street on April 3, 1973, and called a rival engineer at Bell Labs on the first handheld cellular phone. His opening line was essentially: I am calling you from a real handheld portable cellular phone.

The rival reportedly went silent. That silence was priceless.

Queen Elizabeth II was 46 that day, less than three weeks short of her 47th birthday, and the mobile phone era had just officially begun. The Motorola DynaTAC prototype Cooper used weighed about 1.1 kilograms and was 23 centimeters long.

It offered 30 minutes of talk time before needing a 10-hour recharge. Practical it was not, yet.

Commercial mobile phones did not reach consumers until 1983, and even then they were expensive enough to be status symbols rather than everyday tools. The journey from Cooper’s brick phone to the sleek smartphone in your pocket took about 40 years of relentless engineering.

Over 6.8 billion people now own mobile phones. That one phone call on a New York sidewalk truly did start everything.

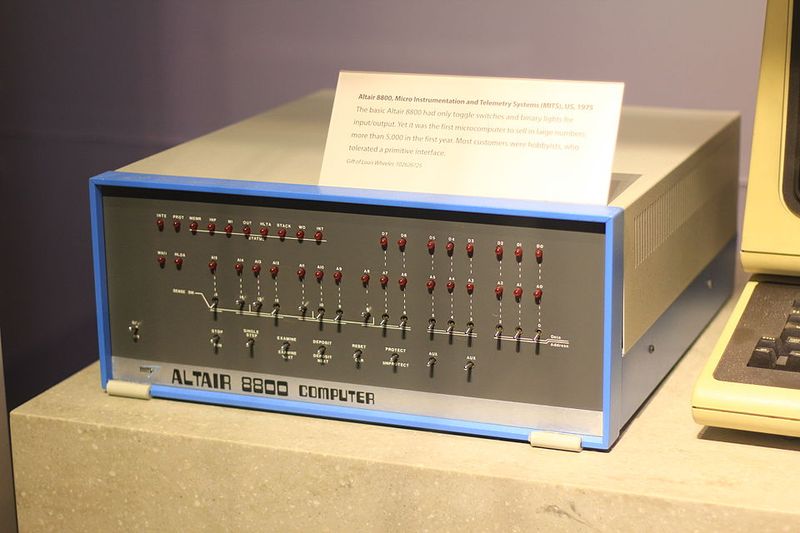

The Personal Computer Revolution (1975)

The MITS Altair 8800 appeared on the cover of Popular Electronics in January 1975 with the headline: World’s First Minicomputer Kit to Rival Commercial Models. It had no keyboard, no monitor, and no software.

You programmed it by flipping switches. And yet thousands of hobbyists ordered one immediately.

People were hungry for personal computing before it even existed properly.

Queen Elizabeth II was 49 that year, and two young men named Bill Gates and Paul Allen read that magazine cover and immediately called MITS to offer software. That phone call led to Microsoft.

The Altair did not just launch a product category. It launched an entire industry and several fortunes.

The personal computer revolution that followed transformed work, education, communication, and entertainment beyond recognition. By the 1980s, IBM and Apple had made computers genuinely user-friendly.

The Altair itself was clunky and difficult, but it proved ordinary people wanted computers at home. Sometimes the most important inventions are the ones that simply prove demand exists.

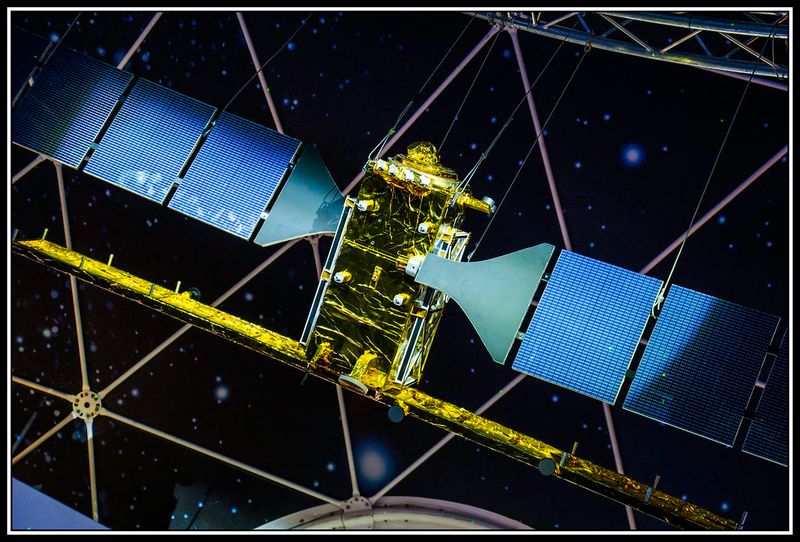

GPS Satellites (1978)

Getting lost used to be a genuine life skill. Before GPS, people navigated with paper maps, road atlases, and occasionally by stopping to ask strangers for directions.

The first Navstar GPS satellite launched on February 22, 1978, and the slow dismantling of map-reading as a necessary human ability had begun.

Queen Elizabeth II was 51 years old when that first satellite went up. The full GPS constellation required 24 satellites and was not fully operational until 1995.

The U.S. military developed it primarily for defense purposes but eventually opened it to civilian use, which turned out to be one of the most generous technological gifts in history.

GPS now guides everything from delivery trucks to hikers to aircraft. It underpins financial transactions, emergency services, and scientific research.

I once drove confidently into a lake following GPS instructions, so I can personally confirm the technology is not entirely foolproof. Still, the world without GPS navigation is genuinely hard to picture now.

Forty-six years of satellites have made getting lost almost optional.

The World Wide Web (1989)

Tim Berners-Lee handed his boss at CERN a proposal in March 1989 titled Information Management: A Proposal. His boss wrote Vague but exciting on the cover and handed it back.

That vague but exciting scribble gave the green light to the invention that changed literally everything about modern life. The World Wide Web was born from a physics lab memo.

Queen Elizabeth II was 63 years old that year, and the internet already existed as a network of computers. But Berners-Lee added something crucial: a system of hyperlinks and web pages that made information accessible to anyone, not just technical experts.

The difference between the internet and the web is exactly that human-friendly layer.

The first website went live on August 6, 1991, and it explained what the World Wide Web was. Meta and wonderful simultaneously.

Today there are over 1.9 billion websites. The Queen lived to see the web go from a CERN experiment to the backbone of global civilization.

Not bad for something that started as vague but exciting.